Balancing Product Development and Security Protocols

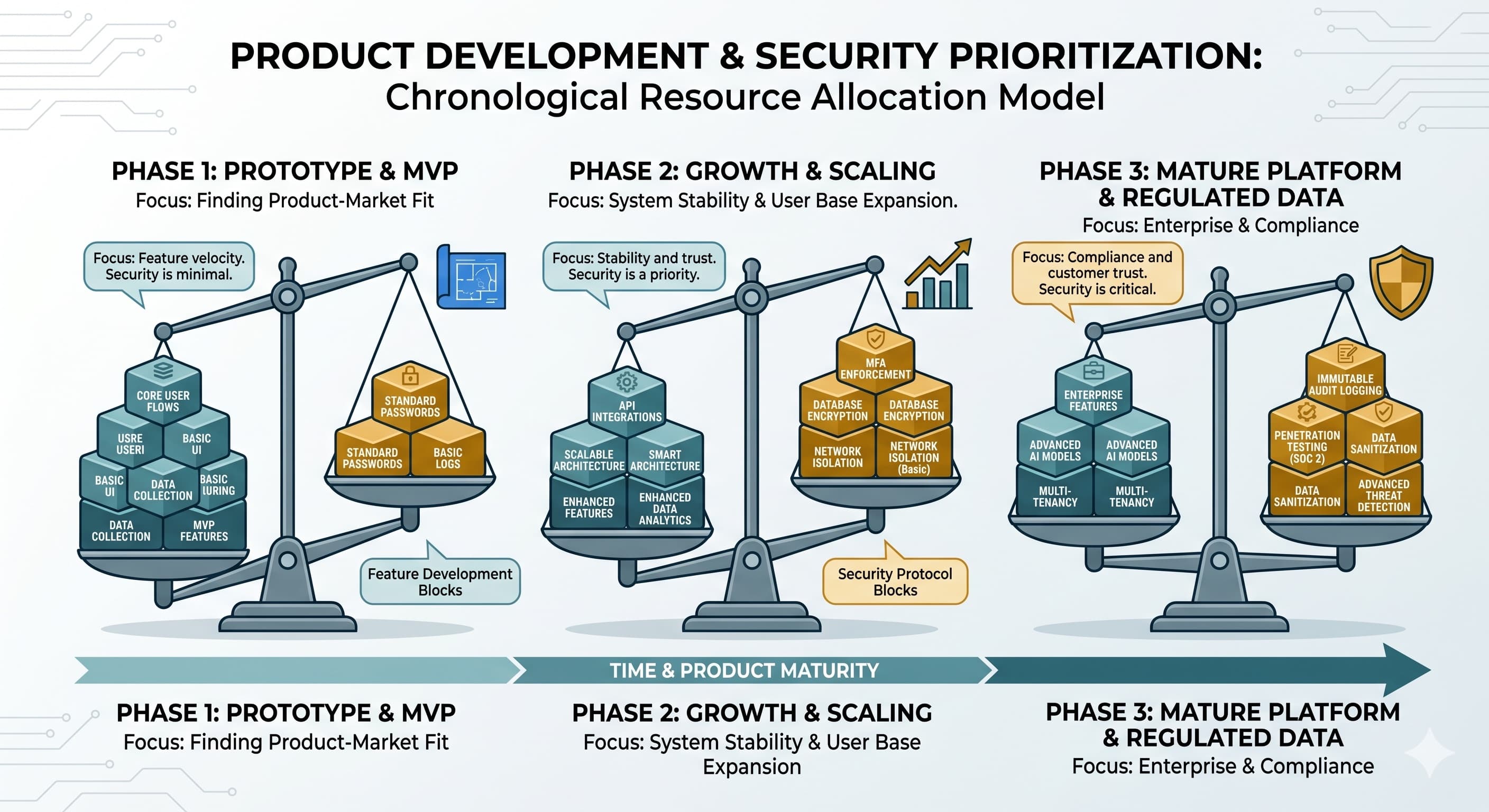

How to divide engineering hours between features and security depends on the data a system handles. Tying each defense to a project milestone keeps spending aligned with actual threats.

Every software team has to divide engineering hours between feature development and infrastructure protection. An initial security baseline limits vulnerabilities while the product acquires its first users. Moving from a prototype to a production environment introduces new operational risks, so engineering teams watch for specific milestones, such as processing financial transactions or entering enterprise procurement reviews, that call for architectural changes. Deploying targeted defenses at those stages aligns security spending with concrete threat models.
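One way to make the milestone approach concrete is a lookup table from milestones to the controls discussed in the sections that follow. This is a minimal sketch: the milestone names and control labels are hypothetical, and the mapping reflects this article's guidance rather than any compliance standard.

```python
from enum import Enum, auto
from typing import Dict, FrozenSet, Iterable, Set


class Milestone(Enum):
    """Hypothetical project milestones; names are illustrative only."""
    PUBLIC_LAUNCH = auto()           # app reachable from the public internet
    STORES_PRIVATE_DATA = auto()     # passwords, payment tokens, health records
    MOVES_FUNDS = auto()             # platform facilitates fund transfers
    HANDLES_HEALTH_DATA = auto()     # HIPAA-regulated records
    ENTERPRISE_SALES = auto()        # procurement reviews, SOC 2 / ISO 27001
    SHARES_DATA_EXTERNALLY = auto()  # LLM integrations, third-party vendors


# Controls triggered by each milestone, following the guidance in this article.
CONTROLS: Dict[Milestone, FrozenSet[str]] = {
    Milestone.PUBLIC_LAUNCH: frozenset({"rate_limiting"}),
    Milestone.STORES_PRIVATE_DATA: frozenset({"encryption_at_rest"}),
    Milestone.MOVES_FUNDS: frozenset({"mfa"}),
    Milestone.HANDLES_HEALTH_DATA: frozenset(
        {"encryption_at_rest", "network_isolation", "mfa", "immutable_audit_log"}
    ),
    Milestone.ENTERPRISE_SALES: frozenset(
        {"network_isolation", "mfa", "penetration_test"}
    ),
    Milestone.SHARES_DATA_EXTERNALLY: frozenset({"data_sanitization"}),
}


def required_controls(reached: Iterable[Milestone]) -> Set[str]:
    """Union of the controls required by every milestone reached so far."""
    controls: Set[str] = set()
    for milestone in reached:
        controls |= CONTROLS[milestone]
    return controls


print(sorted(required_controls([Milestone.PUBLIC_LAUNCH, Milestone.SHARES_DATA_EXTERNALLY])))
# ['data_sanitization', 'rate_limiting']
```

Because controls accumulate as a set union, reaching a new milestone only ever adds work; nothing already deployed is implied to be removable.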

Encryption at Rest

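Encryption at rest scrambles data stored on disk, so a stolen drive, snapshot, or backup is unreadable without the encryption key. It does not hide data from anyone who can query the running database, which decrypts it transparently. Systems that store only public information, such as weather dashboards, forum posts, or marketplace catalogs, gain little from managing encryption keys. Encryption at rest becomes necessary once databases store private user details, such as password hashes, payment tokens, or health records regulated by HIPAA. Cloud providers like AWS and GCP offer it as a configuration setting on their managed storage services, and many services enable it by default; on Amazon RDS, however, it has to be selected when the instance is created.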
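Because the provider handles the cryptography, the engineering task is usually to confirm that every store holding private data has the setting turned on. The sketch below audits a hypothetical inventory; the `StorageResource` type, the data-class labels, and the `encrypted_at_rest` flag are assumptions standing in for whatever a real infrastructure inventory or cloud API reports.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List

# Hypothetical data-class labels that this article treats as private.
SENSITIVE_CLASSES: FrozenSet[str] = frozenset(
    {"password_hash", "payment_token", "health_record"}
)


@dataclass(frozen=True)
class StorageResource:
    """Hypothetical inventory entry for a database, bucket, or volume."""
    name: str
    data_classes: FrozenSet[str]
    encrypted_at_rest: bool


def unencrypted_sensitive_stores(resources: Iterable[StorageResource]) -> List[str]:
    """Names of stores that hold sensitive data but lack encryption at rest."""
    return [
        r.name
        for r in resources
        if r.data_classes & SENSITIVE_CLASSES and not r.encrypted_at_rest
    ]


inventory = [
    # Public catalog data: encryption at rest is optional here.
    StorageResource("catalog-db", frozenset({"product_listing"}), False),
    StorageResource("users-db", frozenset({"email", "password_hash"}), False),
    StorageResource("billing-db", frozenset({"payment_token"}), True),
]
print(unencrypted_sensitive_stores(inventory))  # ['users-db']
```

Running a check like this in CI or on a schedule catches a sensitive store that was provisioned without the setting, which matters most on services where encryption cannot be switched on after creation.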

Deep Network Isolation

A virtual private cloud (VPC) with isolated subnets places databases in network segments that cannot be reached from the public internet. A minimum viable product, such as an e-commerce product catalog, can rely on the default networking of a managed database or platform-as-a-service provider. Handling trade secrets, corporate data from B2B enterprise customers, or regulated health data requires isolating the database from the public web. Placing the database in a private subnet restricts access to the application servers inside the same network.
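A subnet is usually "private" because of its routing: it has no route to an internet gateway. The sketch below models that check with Python's standard `ipaddress` module. The `Subnet` and `Route` types and the `"igw"`/`"nat"` target labels are hypothetical, loosely modeled on cloud route tables; a real check would read them from the provider's API.

```python
import ipaddress
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class Route:
    destination: str  # CIDR block, e.g. "0.0.0.0/0"
    target: str       # hypothetical labels: "local", "igw" (internet gateway), "nat"


@dataclass(frozen=True)
class Subnet:
    name: str
    cidr: str
    routes: Tuple[Route, ...]

    def is_public(self) -> bool:
        # Any route to the internet gateway makes the subnet reachable from outside.
        # A NAT route only allows outbound traffic, so it does not count.
        return any(route.target == "igw" for route in self.routes)


def database_is_isolated(db_ip: str, subnets: Iterable[Subnet]) -> bool:
    """True only if the database address sits in a known, non-public subnet."""
    addr = ipaddress.ip_address(db_ip)
    if addr.is_global:
        return False  # publicly routable address: not isolated
    for subnet in subnets:
        if addr in ipaddress.ip_network(subnet.cidr):
            return not subnet.is_public()
    return False  # address outside every known subnet: fail closed


vpc = [
    Subnet("public-a", "10.0.0.0/24",
           (Route("10.0.0.0/16", "local"), Route("0.0.0.0/0", "igw"))),
    Subnet("private-a", "10.0.1.0/24",
           (Route("10.0.0.0/16", "local"), Route("0.0.0.0/0", "nat"))),
]
print(database_is_isolated("10.0.1.15", vpc))  # True
print(database_is_isolated("10.0.0.20", vpc))  # False
```

The function fails closed: an address it cannot place in a known subnet is reported as not isolated rather than assumed safe.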

Multi-Factor Authentication

Multi-factor authentication requires secondary evidence, such as a code from an authenticator app, before a user can log in. Consumer applications intended for casual daily use, such as news readers or games, can rely on standard passwords or social logins, because forcing secondary codes creates user friction. Platforms that facilitate fund transfers, display medical results, or manage enterprise infrastructure must enforce multi-factor authentication, because the protection it offers outweighs that friction.
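Authenticator-app codes are usually time-based one-time passwords (TOTP, RFC 6238). The following is a minimal standard-library sketch of generating and verifying such codes, assuming the common defaults of HMAC-SHA1, 30-second time steps, and 6 digits. A production system would more likely use a vetted library and would also need secure secret storage, enrollment, and protection against replaying an accepted code.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional


def decode_secret(b32_secret: str) -> bytes:
    """Decode the base32 secret that authenticator apps share via QR code."""
    cleaned = b32_secret.replace(" ", "").upper()
    padding = "=" * (-len(cleaned) % 8)  # apps often omit base32 padding
    return base64.b32decode(cleaned + padding)


def hotp(key: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226)."""
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(key: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the current time step."""
    now = time.time() if at is None else at
    return hotp(key, int(now) // step, digits)


def verify_totp(key: bytes, submitted: str, at: Optional[float] = None,
                step: int = 30, digits: int = 6, window: int = 1) -> bool:
    """Accept codes from up to `window` steps before or after now (clock drift)."""
    now = time.time() if at is None else at
    current = int(now) // step
    for drift in range(-window, window + 1):
        counter = current + drift
        # compare_digest avoids leaking how many leading digits matched.
        if counter >= 0 and hmac.compare_digest(hotp(key, counter, digits), submitted):
            return True
    return False
```

As a sanity check against the test vectors in RFC 6238, `totp(b"12345678901234567890", at=59, digits=8)` returns `"94287082"`. The `window` parameter trades a little security for tolerance of phone clocks that drift by a step.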

Immutable Audit Logging

Immutable logging, built on write-once-read-many (WORM) storage, creates a permanent forensic record of system actions that employees and developers cannot alter or delete. Project management tools and e-commerce storefronts can use standard application logs, which rotate or expire over time to reduce storage costs. Companies in regulated industries need unalterable records to answer SEC or HIPAA auditors, and for them immutable logs act as a legal insurance policy.
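True WORM guarantees come from the storage layer (for example, retention or object-lock features in cloud object stores), not from application code. A complementary application-level technique, shown here instead because it fits in a few lines, is a hash chain: each entry commits to the previous one, so a later edit to any stored entry breaks verification. This makes tampering detectable rather than impossible, and removal of the newest entries is only caught if the latest hash is kept somewhere the writer cannot modify, such as the WORM store itself. The `HashChainedLog` class is a hypothetical sketch.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # stand-in "previous hash" for the first entry


def _entry_hash(prev_hash: str, record: Dict[str, Any]) -> str:
    # Canonical JSON so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class HashChainedLog:
    """Append-only audit log in which each entry commits to its predecessor."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def append(self, record: Dict[str, Any]) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry_hash = _entry_hash(prev, record)
        self.entries.append({"record": dict(record), "prev": prev, "hash": entry_hash})
        return entry_hash  # the new head hash; store it outside the writer's reach

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered entry breaks it."""
        prev = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev or _entry_hash(prev, entry["record"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

In practice the two techniques combine: entries are written to WORM storage, and the chain lets an auditor confirm that the sequence they received is complete and unedited.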

Penetration Testing

Penetration testing simulates an attacker's actions to find vulnerabilities in an application. Before a product reaches product-market fit, frequent code rewrites quickly make test findings obsolete, so early testing is a misallocation of capital. Selling to enterprise clients or pursuing SOC 2 or ISO 27001 certification typically brings a need for third-party penetration test reports, which enterprise procurement teams frequently request.

Data Sanitization

Data sanitization strips personally identifiable information before the data is used for analytics or AI training. Aggregated product metrics, such as geographic traffic or button-click rates, can be analyzed without data-masking pipelines. Integrating large language models or sharing data with third-party vendors requires removing names and Social Security numbers from user prompts and shared datasets, so that AI providers and vendors never ingest the private data.
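A minimal sketch of pattern-based redaction applied before text leaves the system is shown below. It only catches structured identifiers such as US Social Security numbers and email addresses; the patterns are illustrative assumptions, and personal names generally need a named-entity recognition model or a dedicated PII-detection service, which this sketch does not include.

```python
import re

# Illustrative patterns only; real pipelines need broader, locale-aware rules.
PII_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
}


def redact(text: str) -> str:
    """Replace each matched identifier with a typed placeholder like [SSN]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


prompt = "My SSN is 123-45-6789; reach me at jane.doe@example.com"
print(redact(prompt))  # My SSN is [SSN]; reach me at [EMAIL]
```

Typed placeholders, rather than blank deletions, keep the prompt readable for the model while still withholding the underlying values.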

Rate Limiting

Rate limiting restricts the number of requests a single IP address can make within a time window. Internal tools tested with a small user group can run without throttling rules. Applications exposed to the public internet, particularly their login pages, need rate limiting. Without it, servers are exposed to credential-stuffing bots and application-layer denial-of-service floods, and the resulting traffic drives up cloud computing bills.
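A common way to implement this is a token bucket per client key. The sketch below keeps buckets in process memory and keys them by IP address, as the text describes; the class name and parameters are hypothetical. Behind several application servers the counters would need a shared store, and login endpoints are often limited per account as well as per IP, because credential-stuffing bots rotate addresses.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class _Bucket:
    tokens: float
    updated: float


class TokenBucketLimiter:
    """Allow bursts up to `capacity`, refilling at `refill_per_sec` tokens per second."""

    def __init__(self, capacity: int, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = float(capacity)
        self.refill_per_sec = refill_per_sec
        self.clock = clock  # injectable so tests can control time
        self._buckets: Dict[str, _Bucket] = {}  # grows with distinct keys; no eviction here

    def allow(self, key: str) -> bool:
        """Consume one token for `key` (e.g. a client IP); False means throttle."""
        now = self.clock()
        bucket = self._buckets.get(key)
        if bucket is None:
            bucket = _Bucket(tokens=self.capacity, updated=now)
            self._buckets[key] = bucket
        # Refill in proportion to elapsed time, never beyond capacity.
        elapsed = now - bucket.updated
        bucket.tokens = min(self.capacity, bucket.tokens + elapsed * self.refill_per_sec)
        bucket.updated = now
        if bucket.tokens >= 1:
            bucket.tokens -= 1
            return True
        return False
```

For a login endpoint, `TokenBucketLimiter(capacity=5, refill_per_sec=5 / 60)` would allow a burst of five attempts per IP and then roughly one more every 12 seconds. The token bucket is chosen here because it tolerates short legitimate bursts while capping sustained request rates.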

Conclusion

Building baseline security controls into the initial design costs fewer engineering hours than retrofitting them into a live application. A threat assessment and a data-sensitivity classification, completed before code is deployed, establish the architectural boundaries. A system launched without defined security requirements accumulates structural gaps, and closing them later can mean migrating databases, rewriting authentication logic, and scheduling system downtime. Defining compliance requirements early limits legal exposure and reduces later remediation costs.