Startup Founders' Guide: Guidelines for GLBA-Compliant AI Cloud Architecture in Financial Services

Building AI-driven FinTech requires GLBA-compliant architecture from day one. Here's how to structure cloud infrastructure that satisfies SEC oversight without rewriting later.

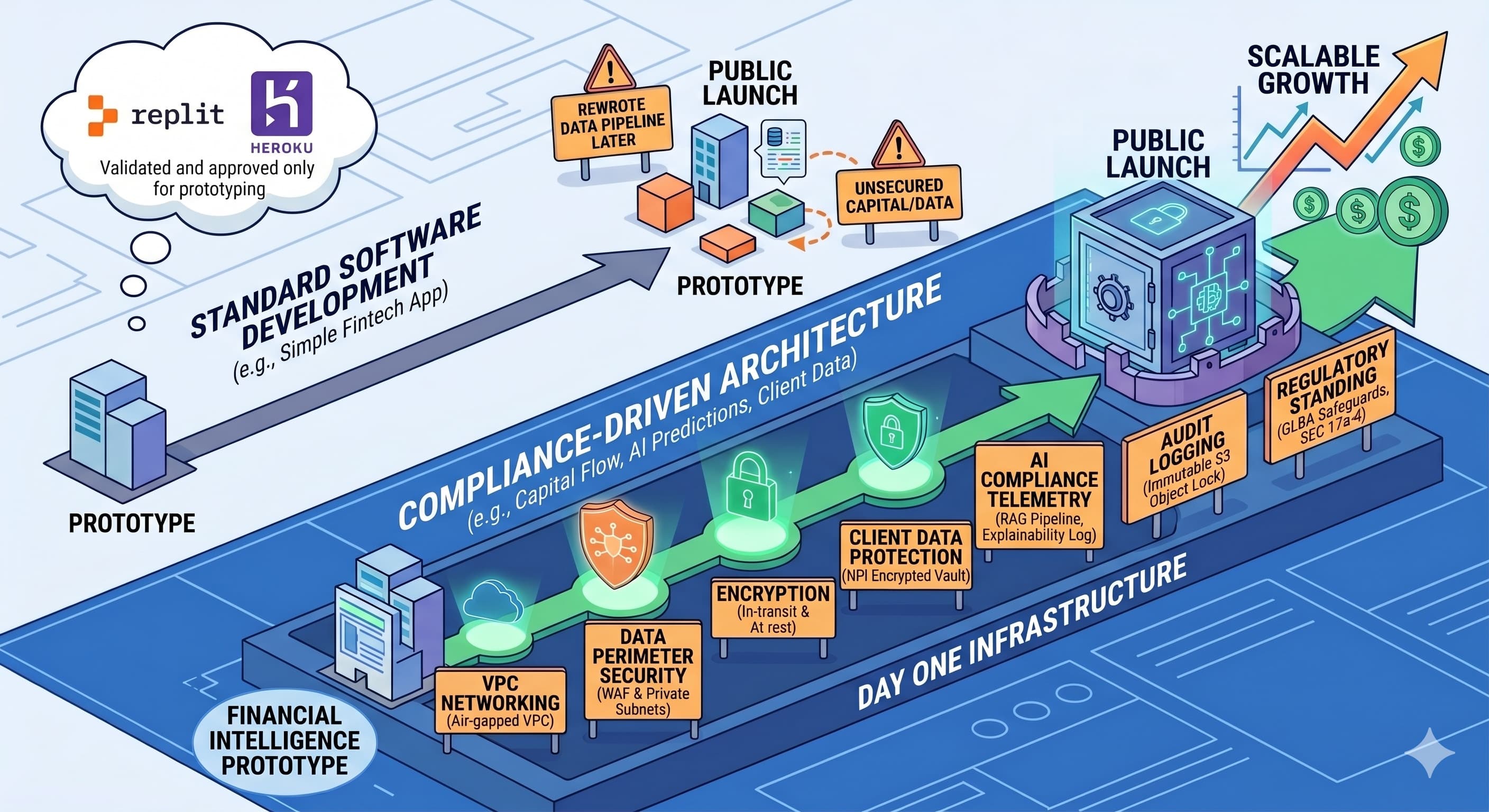

Digital financial products require compliance-driven architecture rather than standard software development practices, especially when AI is introduced. This guide breaks down the technical complexities of GLBA compliance and SEC principles-based rules into plain English. Whether you are validating a financial intelligence prototype or launching to the public, setting up the right infrastructure from day one protects client wealth data, shields your regulatory standing, and ensures your FinTech startup can scale safely without having to rewrite its data pipeline later.

1. Executive Summary: The Shifting Regulatory Landscape

A financial analysis application that uses machine learning must adhere to both the GLBA Safeguards Rule and current SEC enforcement frameworks. Note that the SEC's prescriptive Predictive Data Analytics (PDA) rule proposal was formally withdrawn in June 2025. Today, the SEC's oversight of artificial intelligence is rooted in principles-based rules surrounding materiality and traditional anti-fraud statutes. The primary enforcement focus is on prosecuting fraudulent misrepresentations of AI capabilities, commonly known as "AI-washing".

The regulatory scope and architectural complexity depend on the financial functions your application executes. If your product is a simple personal finance tool that merely displays read-only data or sends basic bill reminders, the compliance scope is relatively narrow. However, the moment your application crosses the threshold into actively moving capital, executing trades, or using AI to generate predictive market forecasts, you trigger oversight by bodies such as the SEC and FINRA. These operations require specific architectural features from day one, including real-time algorithmic explainability, data-provenance tracking, and telemetry to demonstrate that your AI operates in the client's best interests.

The Limits of Platform-as-a-Service (PaaS)

Crossing this regulatory threshold is why developer-friendly platforms like Replit, standard Heroku, or basic Vercel, while suited for rapid prototyping and standard software, fall short for the core backend of a compliant financial application. These Platform-as-a-Service (PaaS) providers are designed to make deployment easy by abstracting away the underlying network infrastructure. However, GLBA and SEC rules require you to have granular control over that network layer. You cannot build a deeply nested, air-gapped Virtual Private Cloud (VPC) with strict subnet isolation, nor can you enforce SEC Rule 17a-4 immutable (WORM) storage, in a standard multi-tenant PaaS environment.

An important caveat: This does not mean you must abandon modern developer tools entirely. You can (and many FinTechs do) host your decoupled, stateless frontend UI on enterprise-tier Vercel, provided it is strictly configured never to cache Nonpublic Personal Information (NPI) at the edge. But the actual "vault" and "brain" of your application (the encrypted databases, the AI inference engines, and the centralized audit logs) must reside within the highly configurable, deeply isolated networks of infrastructure-level cloud providers such as AWS or GCP.
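One way to back up the "never cache NPI at the edge" requirement is to have the backend API set caching headers itself, so the rule does not depend solely on CDN configuration. The following is a minimal sketch, assuming a default-deny policy; the function name `cache_headers_for` and the path prefixes are hypothetical and not taken from any particular framework.

```python
# Hypothetical path prefixes for static, non-sensitive assets (an assumption).
PUBLIC_PREFIXES = ("/static/", "/assets/")


def cache_headers_for(path: str) -> dict[str, str]:
    """Return caching headers for a response served at `path`."""
    if path.startswith(PUBLIC_PREFIXES):
        # Static assets carry no NPI, so shared caches may keep them.
        return {"Cache-Control": "public, max-age=3600"}
    # Default-deny: every other route is treated as potentially carrying NPI.
    # "no-store" instructs browsers and shared caches such as CDNs not to
    # keep a copy of the response.
    return {"Cache-Control": "private, no-store"}
```

Defaulting to `no-store` means a newly added API route is uncacheable until someone deliberately marks it as public, which is the safer failure mode for NPI.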

The Architecture Dilemma: Distributed vs. Consolidated

Deciding whether to use a distributed architecture (e.g., hosting your UI on Vercel while your backend runs on AWS) or a consolidated architecture (keeping everything within a single cloud provider) involves trade-offs in a strictly regulated environment.

- The Distributed Approach (PaaS Frontend + Cloud Backend): The primary advantage of splitting the stack is user experience and sustained developer velocity. By using AI coding environments like Claude Code to generate and manage the codebase, frontend engineering teams can iterate rapidly on platforms purpose-built for UI frameworks without getting bogged down by boilerplate compliance configurations. These AI assistants can swiftly scaffold the complex cross-origin authentication and strict edge blocking required to protect NPI, maintaining high development momentum even within tight regulatory guardrails. However, the administrative compliance burden increases: you are expanding your vendor risk surface, which means managing multiple legal Data Processing Agreements (DPAs) and conducting multiple vendor audits.

- The Consolidated Approach (All-in AWS or GCP): Keeping your entire application, from user interface hosting (e.g., AWS S3 and CloudFront) to the AI inference backend, under one roof simplifies compliance. You benefit from a unified Identity and Access Management (IAM) framework, a single closed-loop network boundary, centralized logging, and a single primary cloud vendor to assess GLBA compliance. The main drawback is developer friction. Deploying modern frontend frameworks natively on infrastructure-heavy clouds can be clunkier, requiring more manual configuration and potentially slowing down your UI iteration cycles compared to the seamless experience of a dedicated PaaS.

Why this matters: Building a standard app, adding an AI API, and attaching security controls before launch fails to meet GLBA and SEC requirements. Baking strict financial privacy standards and algorithmic transparency into the foundation of your technology ensures you are legally protected from day one. If a firm claims its AI operates securely but lacks the architectural telemetry to prove the prompt-and-response chain, it is vulnerable to SEC enforcement under anti-fraud statutes.

2. Core Infrastructure: The "Air-Gapped" Analytics Perimeter

A compliant cloud architecture must utilize strict network segmentation to ensure that databases housing NPI and the AI inferencing engines processing that data are never directly exposed to the public internet.

- The Web Application Firewall (WAF): All incoming client traffic must pass through a cloud-native WAF (such as AWS WAF or Google Cloud Armor) to filter out malicious traffic, SQL injection, and DDoS attacks before reaching the API layer.

- Virtual Private Cloud (VPC) & Subnet Isolation: The architecture must be deployed within a tiered Virtual Private Cloud (VPC) model.

  - Public Subnets: Reserved exclusively for load balancers and NAT gateways.

  - Private Subnets: The Application Layer (API servers) operates here, accessible only via the public load balancers.

  - Isolated Data & AI Subnets: Both the Database layer and the AI/ML inferencing clusters operate in deeply nested private subnets. They must have no route to the public internet and should rely on VPC Endpoints (such as AWS PrivateLink) to securely access other cloud services without traversing the public internet.
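The tiering rules above can be expressed as a small check that runs against a description of your route tables, for example in CI before infrastructure changes are applied. This is a sketch under stated assumptions: the `Subnet` class and `find_violations` function are hypothetical, the route tables are modeled as plain dictionaries rather than fetched from a cloud API, and the `igw-` prefix follows the AWS convention for internet gateway IDs.

```python
from dataclasses import dataclass, field

# Destinations that mean "everything", i.e. a default route.
DEFAULT_ROUTES = ("0.0.0.0/0", "::/0")


@dataclass
class Subnet:
    name: str
    tier: str  # "public", "private", or "isolated"
    # Route table modeled as destination CIDR -> target ID (illustrative).
    routes: dict[str, str] = field(default_factory=dict)


def find_violations(subnets: list[Subnet]) -> list[str]:
    """Return a human-readable message for every tiering rule that is broken."""
    problems = []
    for subnet in subnets:
        for destination in DEFAULT_ROUTES:
            target = subnet.routes.get(destination)
            if target is None:
                continue
            if subnet.tier == "isolated":
                # Data and AI subnets must have no path to the internet at all.
                problems.append(f"{subnet.name}: isolated subnet has default route via {target}")
            elif subnet.tier == "private" and target.startswith("igw-"):
                # Application subnets may egress through NAT, never directly.
                problems.append(f"{subnet.name}: private subnet routes directly to {target}")
    return problems
```

An empty list means the modeled route tables respect the three tiers. A real deployment would populate `Subnet.routes` from the provider's API or from infrastructure-as-code state.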

3. Data Protection, Cryptography, and AI Sanitization

Standard database deployments are insufficient for FinTech. Every layer of the data lifecycle requires cryptographic protection, and AI integration introduces new vectors for data leakage.

- Encryption in Transit & at Rest: The architecture must utilize Transport Layer Security (TLS 1.2 or TLS 1.3) for data in transit and a managed Key Management Service (KMS) for data at rest. This aligns with the FTC's encryption mandate under 16 CFR 314.4(c)(3).

- Strict IAM Cryptographic Controls: Merely utilizing KMS is insufficient if Identity and Access Management (IAM) roles are misconfigured. Under the GLBA Safeguards Rule's breach-notification provisions, customer information is treated as unencrypted if the encryption key was accessed by an unauthorized person, so access to keys must be restricted as tightly as access to the data itself.

- Secure Database Identifiers: Avoid traditional sequential integer IDs, which make records easy to enumerate and amplify Insecure Direct Object Reference (IDOR) flaws. The architecture should use randomized identifiers (such as UUIDv4) so that malicious actors cannot guess neighboring account records, and it must still enforce an authorization check on every object access.

- AI Training vs. Inferencing Walls: NPI must never be ingested into a machine learning model's base training weights unless it is rigorously anonymized. The architecture must utilize Retrieval-Augmented Generation (RAG). In a RAG architecture, the foundational AI model remains static, and the system retrieves relevant, encrypted context to feed temporarily into the AI's context window before discarding it immediately. This ensures NPI is processed in memory without permanently baking the data into neural weights.
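The identifier and RAG points above can be illustrated together. The sketch below is not a production retrieval system: `DocumentStore`, `answer`, and the keyword-overlap scoring are hypothetical stand-ins (a real system would typically use an encrypted vector database), and `call_model` is an assumed callable representing a static model that does not train on its inputs. What it does show is random UUIDv4 record IDs, an ownership check on every retrieval, and context that exists only for the duration of one request.

```python
import uuid
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Document:
    doc_id: str    # random UUIDv4 rather than a sequential integer
    owner_id: str
    text: str


class DocumentStore:
    def __init__(self) -> None:
        self._docs: dict[str, Document] = {}

    def add(self, owner_id: str, text: str) -> str:
        doc = Document(str(uuid.uuid4()), owner_id, text)
        self._docs[doc.doc_id] = doc
        return doc.doc_id

    def retrieve(self, requester_id: str, query: str, limit: int = 3) -> list[str]:
        """Naive keyword retrieval, restricted to the requester's own documents."""
        terms = set(query.lower().split())
        # Authorization check: random IDs alone do not prevent IDOR.
        owned = [d for d in self._docs.values() if d.owner_id == requester_id]
        scored = [(len(terms & set(d.text.lower().split())), d) for d in owned]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [d.text for score, d in scored[:limit] if score > 0]


def answer(store: DocumentStore, requester_id: str, question: str,
           call_model: Callable[[str], str]) -> str:
    # The retrieved context lives only in local variables for this request;
    # nothing here writes it into model weights or a training set.
    context = store.retrieve(requester_id, question)
    prompt = "Context:\n" + "\n".join(context) + "\n\nQuestion: " + question
    return call_model(prompt)
```

Passing the model in as `call_model` keeps the retrieval and authorization logic testable without a live model.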

4. Application Layer, Transparency, and Anti-Fraud Defense

Decoupling the frontend interface from the backend API introduces specific compliance requirements, especially when AI generates market insights.

- Edge Network Liability (CDNs): If the frontend application is distributed globally via Content Delivery Networks, architects must ensure no NPI or sensitive market forecasts generated by the AI are ever cached on these edge servers.

- Defending Against AI-Washing: Under the current SEC regime led by Chairman Paul S. Atkins, financial firms must defend against claims of "AI-washing" by ensuring their architectural telemetry proves their AI claims are materially accurate. Furthermore, the SEC's Investor Advisory Committee (IAC) recommends that AI-related disclosures be integrated into existing Regulation S-K items, detailing board oversight and material effects on operations.

- Stateless Authentication: The API should use stateless, short-lived JSON Web Tokens (JWT) for secure session management.
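To make "stateless, short-lived" concrete, here is a minimal sketch of issuing and verifying an HS256-signed JWT with only the Python standard library. The function names, the 5-minute default lifetime, and the shared-secret approach are illustrative assumptions; a production system would more likely use a maintained JWT library and keys held in a KMS.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    # Restore the padding that the JWT encoding strips.
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(signing_input: str, secret: bytes) -> bytes:
    return hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()


def issue_token(subject: str, secret: bytes, ttl_seconds: int = 300, now=None) -> str:
    issued_at = int(time.time() if now is None else now)
    header = {"alg": "HS256", "typ": "JWT"}
    claims = {"sub": subject, "iat": issued_at, "exp": issued_at + ttl_seconds}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    return signing_input + "." + _b64url(_sign(signing_input, secret))


def verify_token(token: str, secret: bytes, now=None) -> dict:
    """Return the claims if the token is authentic and unexpired, else raise ValueError."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, claims_b64, signature_b64 = parts
    expected = _sign(f"{header_b64}.{claims_b64}", secret)
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
        raise ValueError("bad signature")
    if json.loads(_b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    claims = json.loads(_b64url_decode(claims_b64))
    current = time.time() if now is None else now
    if current >= claims.get("exp", 0):
        raise ValueError("token expired")
    return claims
```

Because the expiry travels inside the signed token, the API needs no session store to reject a stale token; the trade-off is that a token cannot be revoked before it expires, which is one reason to keep lifetimes short.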

5. Audit Logging & Modernized SEC 17a-4 Compliance

Under SEC Rule 17a-4, a system must be able to forensically prove who looked at a client's data and ensure records cannot be tampered with. However, the rigid technological mandates of the past have evolved.

- The Audit-Trail Alternative: While Write-Once-Read-Many (WORM) storage remains compliant, an amendment effective January 3, 2023, introduced an "audit-trail alternative". Under this modernized framework, firms may elect to preserve records on an electronic system that maintains a complete, time-stamped history capturing all modifications, deletions, and operator identities.

- Modern Cloud Logging (Amazon CloudWatch): To satisfy these SEC requirements and GLBA logging mandates, the default architectural standard should be Amazon CloudWatch Logs. Note that AWS CloudTrail Lake is being deprecated and closed to new customers starting May 31, 2026. CloudWatch unifies compliance data, supports the Open Cybersecurity Schema Framework (OCSF), and allows for standardized log processing.

- Absolute Immutability (WORM): If a financial institution requires strict WORM storage (e.g., for finalized client trade confirmations), CloudWatch alone is insufficient. The architecture must utilize Amazon S3 Object Lock configured specifically in Compliance Mode. In Compliance Mode, the retention period is absolute, and no user can delete or overwrite the object. Organizations using GCP can achieve this utilizing the Cloud Storage Bucket Lock feature.

- AI Interaction Logging: The system must log every prompt submitted to the AI and the exact output generated. This serves as the primary legal evidence to prove the prompt-and-response chain during regulatory audits.
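The AI interaction log described above can be made tamper-evident at the application level by chaining each entry to the hash of the previous one. The sketch below illustrates that idea only: hash chaining is a technique chosen here for illustration, not something the rule prescribes, and it complements rather than replaces immutable storage such as S3 Object Lock. The class name, field names, and `model_version` parameter are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: dict) -> str:
    # Canonical JSON so the same record always produces the same hash.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class InteractionLog:
    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, user_id: str, prompt: str, response: str, model_version: str) -> dict:
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else GENESIS_HASH
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "model_version": model_version,
            "prompt": prompt,
            "response": response,
            "prev_hash": prev_hash,
        }
        # The hash covers every field above, including the link to the prior entry.
        record["entry_hash"] = _digest(record)
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; any edited, removed, or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for record in self.entries:
            body = {k: v for k, v in record.items() if k != "entry_hash"}
            if record["prev_hash"] != prev_hash or _digest(body) != record["entry_hash"]:
                return False
            prev_hash = record["entry_hash"]
        return True
```

In practice each entry would be shipped to the centralized log store as it is written; the in-memory list stands in for that destination.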

6. The Expanded GLBA Safeguards Rule Mandates

The FTC has expanded the GLBA Safeguards Rule. Conceptual security alone does not satisfy current requirements; technical architecture must support highly prescriptive administrative actions enforced in 2024.

- The 500-Consumer Breach Threshold: Financial institutions are legally obligated to notify the FTC within 30 days of discovering a security breach involving the unauthorized acquisition of unencrypted customer information belonging to at least 500 consumers. Without comprehensive VPC Flow Logs and data-plane access logs, a firm cannot prove that an unauthorized viewer did not download data, which triggers an automatic presumption of an "unauthorized acquisition".

- Continuous Monitoring and Technical Testing: The Safeguards Rule requires information systems to undergo either continuous monitoring or rigorous periodic testing. If continuous monitoring is not utilized, the firm must conduct annual penetration testing alongside comprehensive vulnerability assessments at least every six months, as well as whenever there are material changes to operations.

- The "Qualified Individual": Financial institutions must formally designate a "Qualified Individual" (e.g., an internal employee or a vCISO) responsible for the security program. This individual must report in writing at least annually to the board of directors detailing the program's status and compliance posture.
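The breach-notification threshold above reduces to a small decision rule, which is worth encoding so that incident tooling applies it consistently. The sketch below is a simplification of the rule as described in this guide: the `SecurityEvent` fields are hypothetical names, and `logs_show_no_acquisition` stands in for the log evidence needed to rebut the presumption of acquisition.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

NOTIFICATION_THRESHOLD = 500   # consumers
NOTIFICATION_WINDOW_DAYS = 30  # days after discovery


@dataclass
class SecurityEvent:
    discovered_on: date
    consumers_affected: int
    data_was_encrypted: bool
    # A compromised key means the data is treated as unencrypted.
    key_accessed_by_unauthorized_party: bool = False
    # True only if flow logs and access logs show that no data was acquired.
    logs_show_no_acquisition: bool = False


def ftc_notification_deadline(event: SecurityEvent) -> Optional[date]:
    """Return the latest notification date, or None if the thresholds are not met."""
    effectively_unencrypted = (
        not event.data_was_encrypted or event.key_accessed_by_unauthorized_party
    )
    # Without evidence to the contrary, unauthorized access is presumed
    # to be an unauthorized acquisition.
    acquisition_presumed = not event.logs_show_no_acquisition
    if (
        effectively_unencrypted
        and acquisition_presumed
        and event.consumers_affected >= NOTIFICATION_THRESHOLD
    ):
        return event.discovered_on + timedelta(days=NOTIFICATION_WINDOW_DAYS)
    return None
```

For example, an event discovered on 1 March 2026 that exposed 750 unencrypted records yields a deadline of 31 March 2026, while the same event with intact encryption and uncompromised keys returns `None`.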

7. Legal Infrastructure: Vendor Risk and DPAs

Technical architecture cannot be separated from legal compliance. The cloud provider's shared responsibility model dictates that simply hosting on AWS or GCP does not automatically make an application compliant.

- Cloud Service Provider Undertakings: Historically, broker-dealers needed an independent Designated Third Party (D3P) to guarantee SEC access to records. Modernized rules now permit an "Alternative Undertaking" specifically tailored for Cloud Service Providers (CSPs). Major cloud providers like AWS and Google Cloud offer streamlined Addendums that allow them to facilitate SEC access directly, bypassing expensive third-party consultants. Alternatively, a firm may appoint an internal Designated Executive Officer (DEO).

- AI Vendor Zero-Data-Retention Agreements: If your platform uses third-party Large Language Model APIs, you must secure enterprise-level Data Processing Agreements guaranteeing "zero-data-retention". These legally bind the AI provider to process data ephemerally, prohibiting them from retaining NPI or training their future models on your clients' prompts.

8. Cost and Complexity: The True Economics of Compliance

Founders require accurate financial benchmarking. Building an AI-driven, compliant FinTech product requires more resources than developing a standard web application.

A true "compliance-driven" FinTech MVP capable of passing due diligence must be "Audit-Ready" from the first line of code. In 2026, building a complex AI platform MVP featuring LLM integration, RAG pipelines, and strict SEC/GLBA telemetry generally requires an initial build cost ranging from $140,000 to well over $300,000.

By comparison, a lean FinTech MVP, such as a basic registration app testing market demand with minimal integrations, can often be launched for $15,000 to $55,000. The wide variance in cost is driven by the reality that an advanced AI financial platform cannot cut corners to save money. The higher budget directly funds the specialized engineering required to deploy active-active multi-region clusters, secure vector databases for LLMs, rigorous KYC/AML identity verification flows, and the foundational compliance controls necessary to pass institutional due diligence or secure state money transmitter licenses.

Furthermore, founders must account for the ongoing operational "compliance tax". Retaining vast quantities of immutable audit logs, paying for high-volume AI inferencing tokens, scheduling mandatory penetration testing, and conducting continuous security monitoring realistically incurs ongoing monthly expenses ranging from $3,000 to $15,000+. These ongoing costs offset the financial exposure of regulatory penalties and breach liability.
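Combining the build and operating figures above gives a rough first-year budget. The arithmetic below is a sketch only: it uses the endpoints quoted in this section, and since both upper figures are open-ended ("well over $300,000" and "$15,000+"), the higher result is a reference point rather than a ceiling. The function name is hypothetical.

```python
def first_year_cost(build_cost: int, monthly_operating_cost: int) -> int:
    """Initial build plus twelve months of ongoing compliance and operating cost."""
    return build_cost + 12 * monthly_operating_cost


low = first_year_cost(140_000, 3_000)    # 176000
high = first_year_cost(300_000, 15_000)  # 480000
print(f"First-year budget: ${low:,} to ${high:,} or more")
```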

Conclusion: The Stakes of Getting It Wrong

As a founder in the financial technology space, testing a "quick and dirty" prototype or deploying an AI market analysis app that bypasses these strict cloud architectural rules carries direct legal liability under GLBA and SEC anti-fraud statutes.

A data breach or algorithmic compliance failure can lead to FTC fines under the GLBA, loss of operating capital sufficient to halt the business, and SEC enforcement actions for material misrepresentations or fiduciary breaches. For founders in finance, losing SEC registration or FINRA standing typically prevents continued work in the industry. By building your application on a secure, compliant, and transparent cloud architecture from the very beginning, you protect client portfolios, professional licenses, and the startup's continued operation.